The Danger of AI 'Vibe Coding' in Production: The Illusion of Competence


AI coding tools are now astonishingly capable.

They can generate working code, fix bugs, suggest architecture, and help teams ship faster than ever before. Used correctly, they can dramatically improve developer productivity.

But I recently encountered a situation that reinforced something important:

AI-assisted coding without trained engineering oversight creates an illusion of competence, and that illusion is dangerous.

This post is not anti-AI. I use AI tools daily and find them incredibly useful.

But using AI to modify a production system without engineering discipline is not acceleration. It is risk multiplication.

The situation

Here is what I saw when I was asked to help with some application upgrades.

The application:

- is a public-facing UK website

- has a solid, growing user base

- is run by a startup that has had national TV coverage

- currently runs on unsupported framework and software versions

It became apparent that non-technical but intelligent users had been:

- using Claude Code to generate fixes

- uploading changes via SFTP to staging (there was no local development environment)

- manually testing them

- committing only to the staging branch

- syncing files directly to the production filesystem from staging

The risks were obvious:

- no code review

- no automated tests

- no controlled deployment process

- no build scripts run

- no documentation

“If it works, ship it.”

The production problem

The production system could not be reliably reconstructed from source control.

Why?

- Git branches out of sync, for example the production branch behind staging

- production containing changes not reflected in version control

- no documented Docker development environment setup

- Docker containers using `latest` instead of pinned versions of key dependencies, allowing them to drift ahead of what could run in production

- no example `.env` files, so environment configuration is undocumented
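The unpinned-image problem is easy to detect automatically. Here is a minimal sketch that scans Dockerfile text for base images tagged `latest` or carrying no tag at all. The function name `unpinned_images` is my own, and the parsing is deliberately simple: it understands `FROM` options and `AS` stage aliases, but it will also flag images supplied through build arguments such as `FROM ${BASE}`.

```python
import re

# Captures the image reference on a FROM line, skipping options such as
# --platform=..., plus the optional "AS <stage>" alias.
FROM_LINE = re.compile(
    r"^\s*FROM\s+(?:--\S+\s+)*(\S+)(?:\s+AS\s+(\S+))?", re.IGNORECASE
)


def unpinned_images(dockerfile_text: str) -> list[str]:
    """Return base images that use `latest` or have no tag or digest."""
    flagged = []
    stage_aliases = set()
    for line in dockerfile_text.splitlines():
        match = FROM_LINE.match(line)
        if not match:
            continue
        image, alias = match.group(1), match.group(2)
        # "scratch" is the reserved empty image; an earlier stage name is
        # not an external image. Neither needs pinning.
        is_internal = image.lower() == "scratch" or image.lower() in stage_aliases
        if alias:
            stage_aliases.add(alias.lower())
        if is_internal or "@" in image:  # "@sha256:..." means pinned by digest
            continue
        # A tag follows a ':' in the last path segment; a ':' earlier in the
        # reference is a registry port, not a tag.
        name = image.rsplit("/", 1)[-1]
        tag = name.split(":", 1)[1] if ":" in name else None
        if tag is None or tag == "latest":
            flagged.append(image)
    return flagged
```

Run against every Dockerfile in the repository, a check like this could fail a build before an unpinned image ever reaches staging.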

This creates a situation where:

- production state cannot be trusted

- rollback becomes impossible

- debugging becomes guesswork

- upgrades become dangerous

Software engineering depends on reproducibility. This system had none.
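One way to measure how far production has drifted from version control is to hash every file in a clean checkout and in a copy of the deployed tree, then compare the two. This is a minimal sketch under my own assumptions: `file_hashes` and `drift` are hypothetical names, and only the `.git` directory is excluded (a real system would also need to skip uploads, caches and similar runtime directories).

```python
import hashlib
from pathlib import Path


def file_hashes(root: Path) -> dict[str, str]:
    """Map each file's path relative to root to its SHA-256 digest."""
    hashes = {}
    for path in sorted(root.rglob("*")):
        relative = path.relative_to(root)
        if path.is_file() and ".git" not in relative.parts:
            hashes[relative.as_posix()] = hashlib.sha256(path.read_bytes()).hexdigest()
    return hashes


def drift(checkout: Path, deployed: Path) -> dict[str, list[str]]:
    """Report files that differ between a checkout and a deployed tree."""
    source, live = file_hashes(checkout), file_hashes(deployed)
    shared = source.keys() & live.keys()
    return {
        "only_in_production": sorted(live.keys() - source.keys()),
        "only_in_source": sorted(source.keys() - live.keys()),
        "modified": sorted(p for p in shared if source[p] != live[p]),
    }
```

Anything listed under `only_in_production` or `modified` is a change that exists nowhere except on the server, which is exactly what makes a rebuild from source untrustworthy.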

Accidental stability is not reliability

The server was running PHP 8.1.

However, dependency inspection showed some installed libraries required PHP 8.2 as a minimum.

The application still worked.

Purely by chance.

This is what I call accidental stability:

- the system works

- nobody knows why

- nobody knows when it will stop working

This is not reliability. It is a ticking clock.
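This kind of mismatch can be caught before it bites. Composer's own `composer check-platform-reqs` command is the authoritative check, but the idea is simple enough to sketch: read the `php` requirement of each package in `composer.lock` and compare it with the version the server runs. The sketch below is a simplification under my own assumptions. The function names are hypothetical, and it only compares lower bounds, so it ignores upper limits such as `<8.4` and the implied ceiling of `^` constraints.

```python
import re

# Matches "8", "8.2" or "8.2.1" inside a constraint string.
VERSION = re.compile(r"(\d+)(?:\.(\d+))?(?:\.(\d+))?")


def lower_bounds(constraint: str) -> list[tuple[int, ...]]:
    """Lowest version named in each `||` alternative of a constraint."""
    bounds = []
    for alternative in re.split(r"\|\|?", constraint):
        versions = [
            tuple(int(part or 0) for part in match.groups())
            for match in VERSION.finditer(alternative)
        ]
        if versions:
            bounds.append(min(versions))
    return bounds


def incompatible_packages(lock: dict, runtime: str) -> list[tuple[str, str]]:
    """List (package, constraint) pairs whose minimum PHP exceeds runtime."""
    running = lower_bounds(runtime)[0]
    problems = []
    for package in lock.get("packages", []):
        constraint = package.get("require", {}).get("php")
        if not constraint:
            continue  # package declares no PHP requirement
        bounds = lower_bounds(constraint)
        # Incompatible only if every alternative needs a newer PHP.
        if bounds and all(bound > running for bound in bounds):
            problems.append((package["name"], constraint))
    return problems
```

Usage would look something like `incompatible_packages(json.load(open("composer.lock")), "8.1.27")`, with any non-empty result treated as a failed check.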

Manual testing as the only safety net

Changes were validated using manual testing alone.

This creates huge blind spots:

- security regressions

- edge-case failures

- data integrity issues

- performance degradation

- subtle runtime problems

- future compatibility issues

Manual testing confirms happy paths. Production systems fail on edge cases.
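To make the contrast concrete, here is an illustrative example that is not taken from the application in question. `parse_quantity` is a hypothetical form-input helper. A manual click-through would typically try a value like `3` and move on; an automated test can cheaply pin down the inputs nobody thinks to type by hand.

```python
def parse_quantity(raw: str, maximum: int = 100) -> int:
    """Parse an order quantity from form input (hypothetical helper)."""
    text = raw.strip()
    # isdigit() alone accepts non-ASCII digits, so require ASCII as well.
    if not text.isascii() or not text.isdigit():
        raise ValueError(f"quantity must be a whole number: {raw!r}")
    value = int(text)
    if not 1 <= value <= maximum:
        raise ValueError(f"quantity must be between 1 and {maximum}")
    return value


def rejects(raw: str) -> bool:
    """Return True if parse_quantity refuses the input."""
    try:
        parse_quantity(raw)
    except ValueError:
        return True
    return False


def test_parse_quantity() -> None:
    # Happy path: what manual testing usually covers.
    assert parse_quantity("3") == 3
    # Boundary values and surrounding whitespace.
    assert parse_quantity("1") == 1
    assert parse_quantity(" 100 ") == 100
    # Edge cases: empty, zero, negative, too large, fractional,
    # scientific notation, and a non-ASCII digit.
    for bad in ["", "0", "-1", "101", "2.5", "1e3", "٣"]:
        assert rejects(bad), bad
```

Once a test like this exists, every future change, AI-generated or not, is checked against the same edge cases automatically.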

Trial-and-error incident response

If production broke, the recovery plan was simple:

The non-technical users would fix issues by trial and error.

This introduces:

- unpredictable downtime

- risk of making incidents worse

- slow recovery

- no reliable debugging strategy

- operational fragility

Software systems need deterministic recovery processes. Trial and error is not a strategy.
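As one example of what a deterministic recovery process can look like, a common pattern is to keep each release in its own directory and point a `current` symlink at the active one, so that rollback is a single, repeatable step. The sketch below is mine rather than anything this system had. The directory layout and the function names are assumptions, release names are assumed to sort chronologically (timestamps, for instance), and it relies on POSIX rename semantics.

```python
import os
from pathlib import Path


def activate(releases: Path, current: Path, name: str) -> None:
    """Atomically repoint the `current` symlink at releases/<name>."""
    target = releases / name
    if not target.is_dir():
        raise FileNotFoundError(f"unknown release: {name}")
    temporary = current.with_name(current.name + ".tmp")
    if temporary.is_symlink():
        temporary.unlink()  # clear a leftover from an interrupted switch
    temporary.symlink_to(target, target_is_directory=True)
    # Renaming the new link over the old one swaps releases in one step.
    os.replace(temporary, current)


def rollback(releases: Path, current: Path) -> str:
    """Switch to the release before the active one and return its name."""
    names = sorted(p.name for p in releases.iterdir() if p.is_dir())
    active = current.resolve().name
    index = names.index(active)
    if index == 0:
        raise RuntimeError("no earlier release to roll back to")
    activate(releases, current, names[index - 1])
    return names[index - 1]
```

The point is not this particular mechanism but that recovery becomes a known operation with a known outcome, instead of live experimentation on a broken server.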

The real problem: the illusion of competence

The most striking part of the experience was the confidence.

The users making changes appeared highly confident in AI-generated fixes, despite having no technical background.

This is the core danger:

AI produces working output that feels like understanding.

But:

- AI fluency is not engineering knowledge

- working code is not safe code

- a successful deployment is not system stability

- confidence is not competence

AI lowers the barrier to making changes. It does not remove the need to understand the consequences.

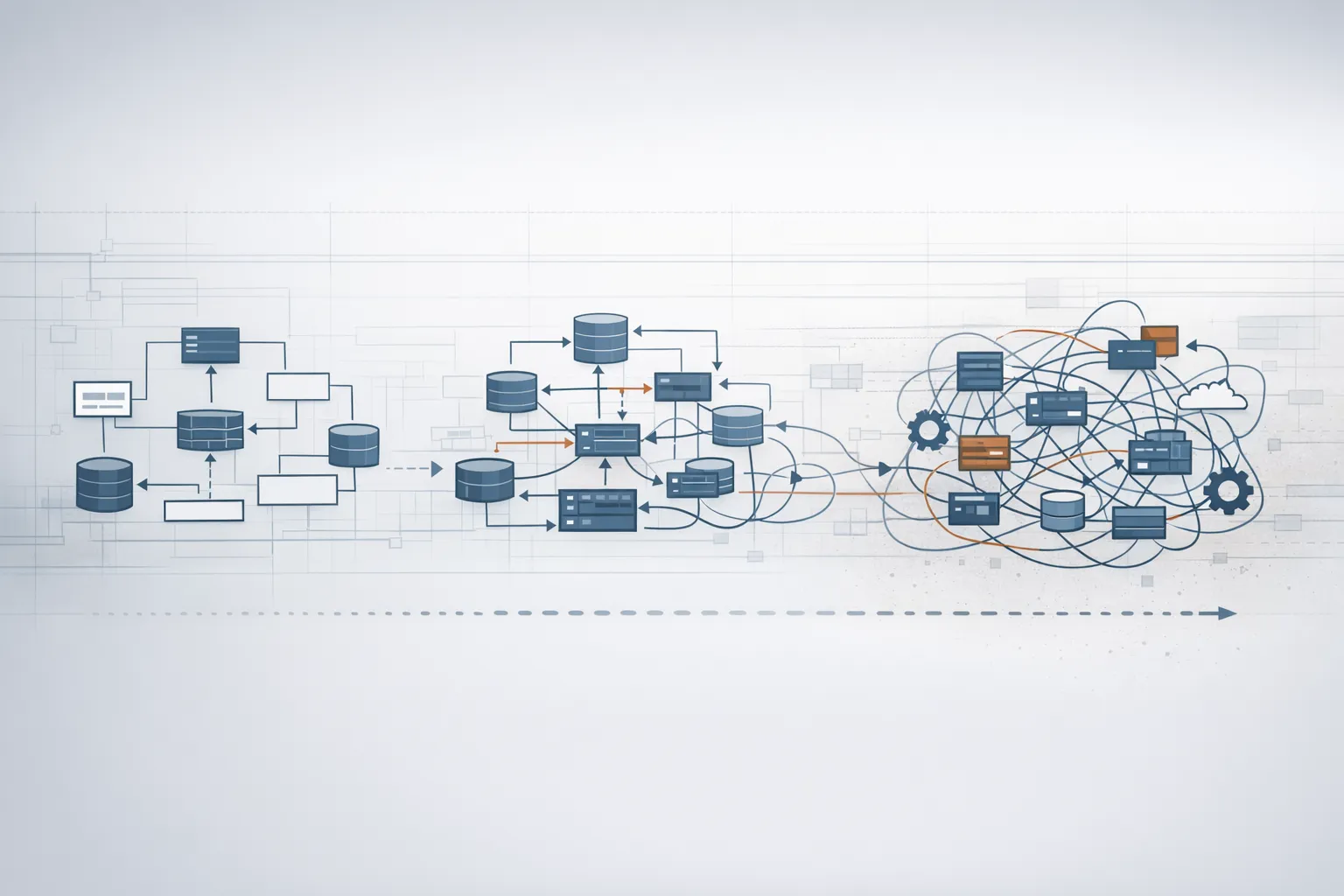

Long-term risk: architecture drift

The immediate risks are concerning, but the long-term risks are worse:

- architecture slowly degrades

- inconsistent patterns accumulate

- technical debt compounds

- upgrade costs increase

- system behaviour becomes unpredictable

- pressure for a full rewrite eventually builds

Architecture rarely collapses overnight. It erodes gradually until repair becomes impractical.

Unsupervised AI changes speed up this process.

Why this happens

Modern AI systems are excellent at:

- generating plausible solutions

- fixing surface-level problems

- producing convincing explanations

- providing fast answers

For non-technical users, this creates a powerful feedback loop:

- AI suggests a solution

- the system appears to work

- confidence increases

- the process repeats

The user feels capable. The system becomes fragile.

Where AI coding is appropriate

AI is not the problem.

Lack of engineering discipline is.

AI works extremely well when:

- used by trained developers

- code is reviewed

- changes follow architectural standards

- tests validate behaviour

- environments are controlled

- long-term maintainability is considered

AI is a multiplier for expertise. It is not a replacement for it.

What startups and CTOs should understand

Software is complex.

AI is increasingly good at making changes quickly. But speed without discipline creates systemic risk.

Without trained oversight:

- systems become unmaintainable

- bugs accumulate silently

- architecture degrades

- security risk increases

- future development slows dramatically

Every production system needs:

- version control discipline

- reproducible environments

- code review

- a deployment process

- architectural oversight

- engineering accountability

These are not optional processes. They are what make software sustainable.

Final thoughts

AI coding tools are transformative.

But they introduce a new kind of risk:

Confidence grows faster than understanding.

When non-technical users modify production systems with AI assistance, they gain the power to change complex systems without the knowledge required to maintain them.

The result is not innovation. It is a fragile system that nobody fully understands.

Use AI.

But use it with engineering discipline.