Why AI Coding Has Split Software Engineers Into Two Camps

AI coding tools have advanced quickly enough that most engineers now know someone who uses them heavily and someone else who refuses to touch them beyond autocomplete, if that.

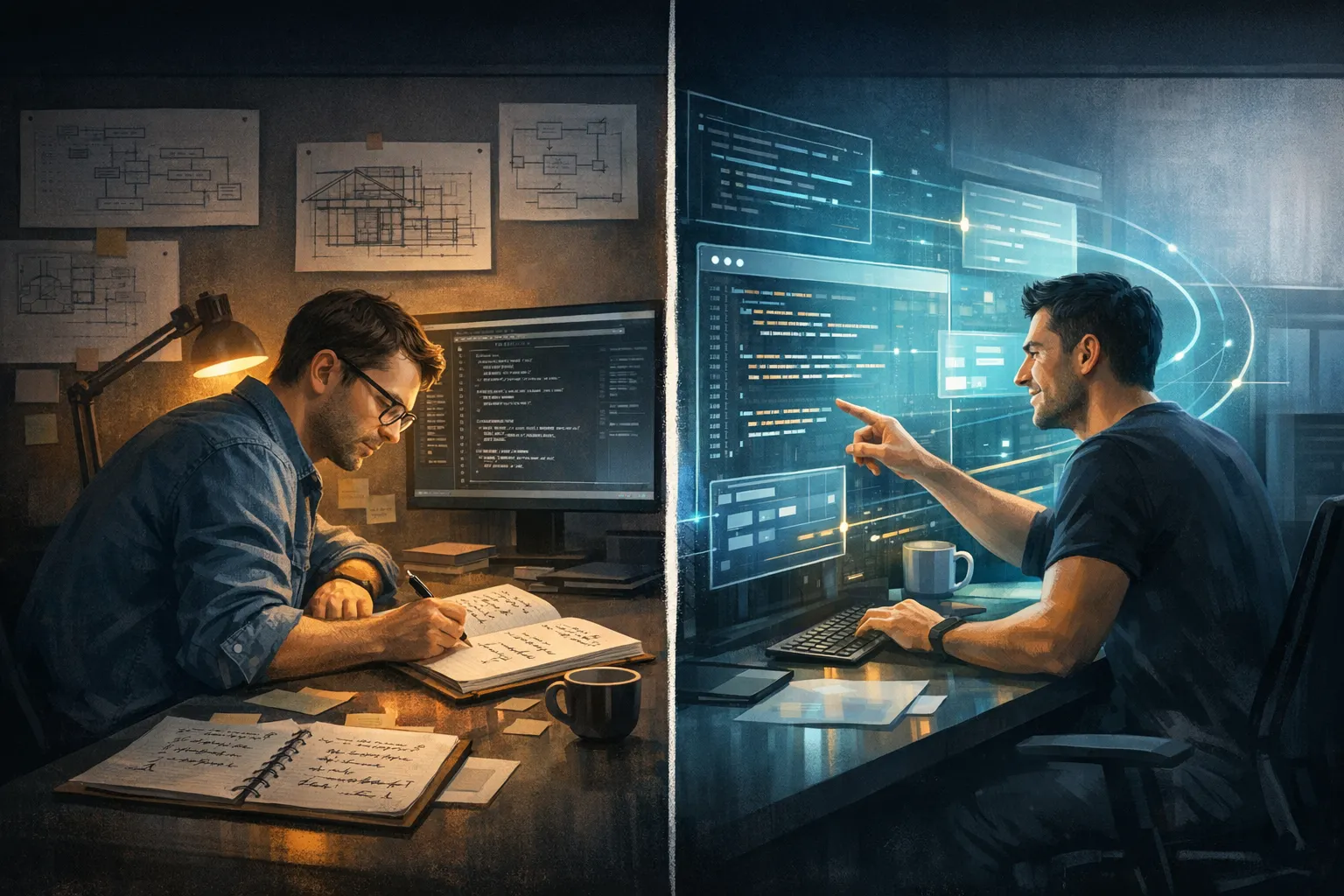

That divide is not really about whether the tools can produce code. They clearly can. The real split is about what different engineers think software engineering is for, where value comes from, and what risks are acceptable in exchange for speed.

In practice, that has created two broad camps: people who see AI coding as the obvious next leverage layer, and people who see it as a fast route to worse systems, weaker teams, and shallower engineering.

The First Camp: Outcome-First Adopters

The pro-AI camp is usually not claiming the tools are perfect.

Their argument is simpler: the productivity gain is already large enough that refusing to use AI feels like refusing to use search, tests, or version control when each of those first arrived.

These engineers tend to care most about:

- Moving faster on routine implementation

- Offloading boilerplate, refactors, and repetitive debugging

- Working at a higher level of abstraction

- Increasing the output of smaller teams

From that perspective, AI coding is not about romance or purity. It is about throughput.

If a tool can draft a migration, write a test skeleton, explore a codebase, or fix a boring class of defects in minutes, then the practical question becomes: why would a professional ignore that leverage?

This camp often experiences AI as relief. Less time typing. Less time context switching. Less time doing work that feels mechanical compared with architecture, trade-offs, product thinking, or validation.

The Second Camp: Craft-First Resisters

The resistant camp is not simply anti-technology.

Most of the time, their concern is that AI changes the economics of software work faster than it improves the quality of the output. They are looking at second-order effects, not just demo speed.

These engineers tend to worry about:

- Code that works locally but does not fit the surrounding system

- More review effort shifted onto senior engineers

- Technical debt arriving faster than teams can understand it

- Skill atrophy in juniors who learn to prompt before they learn to reason

- Pressure from management to substitute generated output for real engineering judgment

For this camp, the problem is not that AI can help. It is that it can help just enough to be dangerous.

That is especially true in environments where leaders measure velocity badly. A manager can look at faster ticket closure and conclude the tool is a pure win, while the hidden costs land later as brittle architecture, weak ownership, and expensive clean-up.

To engineers who already spend their time untangling other people’s shortcuts, AI can look less like leverage and more like an accelerant for mess.

Why The Argument Feels So Emotional

Plenty of engineering debates are technical. This one is partly personal.

AI coding does not only introduce a new tool. It challenges a developer’s idea of what the job is.

If you believe programming is primarily about solving business problems, then AI is a natural extension of abstraction. You describe intent more directly, let the machine handle more of the implementation, and keep human attention on judgment.

If you believe programming is also a craft built through deliberate practice, then handing more of the implementation to AI can feel like bypassing the very process that creates good engineers.

That is why the same tool can feel liberating to one person and corrosive to another.

The split is not just about code quality. It is about identity:

- Is the engineer mainly a builder of systems, or a manager of machine-generated output?

- Is writing code the core skill, or is it becoming a lower-level implementation detail?

- Is adoption a sign of adaptability, or a willingness to trade understanding for convenience?

When a tool forces those questions into the open, the disagreement stops being shallow very quickly.

Trust Is Unevenly Distributed

Another reason the field has split is that AI coding fails neither consistently nor obviously.

Sometimes it really does save hours. Sometimes it creates a clean first draft that an experienced engineer can review quickly. Sometimes it helps someone break through a local blockage and move on.

But the failure mode is asymmetric.

One wrong dependency, one hallucinated assumption, one security issue, or one subtle mismatch with existing patterns can create costs that arrive later and land on someone else.

That makes trust highly dependent on context.

An experienced engineer in a strong codebase with tests, review discipline, and clear architecture may get a lot of value from AI coding.

A weaker team with poor standards, shaky ownership, and delivery pressure may get a lot of plausible-looking damage at high speed.

Both groups can be reporting honestly. They are often just describing different environments.

Career Anxiety Makes The Divide Worse

There is also a labour-market layer underneath the technical arguments.

Senior engineers may worry that AI makes organisations even less willing to invest in junior growth. Junior and mid-level engineers may worry that the apprenticeship path gets thinner if more of the entry-level implementation work is automated away.

That matters because software engineering has traditionally developed people through repetition:

- Write the code

- Break the code

- Debug the code

- Learn the trade-offs

If AI removes too much of that loop too early, some engineers fear the industry will end up with fewer people who truly understand what they ship.

At the same time, people who embrace AI often see the opposite risk: that resisting the tools will make engineers less competitive, slower, and increasingly detached from how modern teams actually work.

So both camps think they are defending the future of competent engineering. They just disagree about what threatens it more.

Both Camps Are Seeing Something Real

The adopters are right that AI coding is already useful.

The resisters are right that usefulness is different from safety, maintainability, or sound team design.

That is why the strongest position is probably neither full enthusiasm nor blanket rejection.

AI coding works best when it sits inside a mature engineering system:

- Clear ownership

- Strong review culture

- Good tests and verification

- Sensible security controls

- Engineers who still understand the code they accept

Without those conditions, the tool can turn existing weaknesses into faster failures.

With those conditions, it can remove a large amount of low-value toil.

What The Divide Is Really About

At a deeper level, this is a disagreement about where engineering value lives.

One side increasingly believes value lives in problem framing, system design, validation, and shipping outcomes. In that view, writing every line by hand matters less each year.

The other side believes value still lives in close contact with implementation, because understanding emerges from doing the work, not just supervising it.

Those are not trivial differences. They lead to different instincts about hiring, training, code review, team design, and what it means to be a good engineer.

That is why AI coding has not simply been adopted like a better editor plugin. It has exposed a philosophical split that was already there.

Conclusion

AI coding has divided software engineers because it is not just another tool upgrade.

It changes speed, yes, but it also changes trust, learning, status, identity, and the boundary between understanding and delegation.

The pro-AI camp sees leverage that is already too large to ignore.

The resistant camp sees a profession at risk of confusing faster output with better engineering.

Both are reacting to something real. The teams that handle this shift well will probably be the ones that avoid the two worst mistakes: blind adoption and performative resistance.

The better path is harder. Use AI where it genuinely increases leverage, but keep human judgment, verification, and technical ownership non-negotiable.

That does not end the divide, but it does make it more productive.